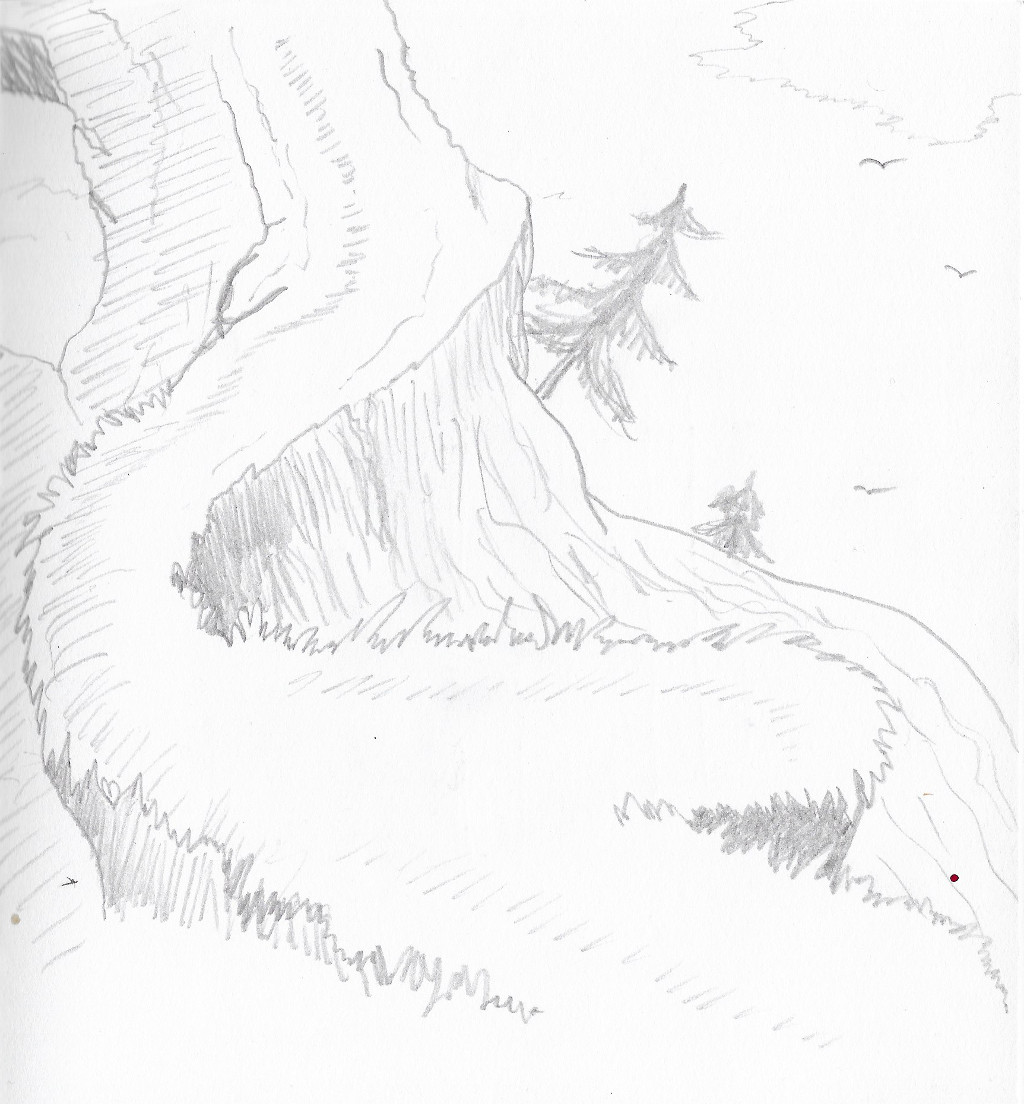

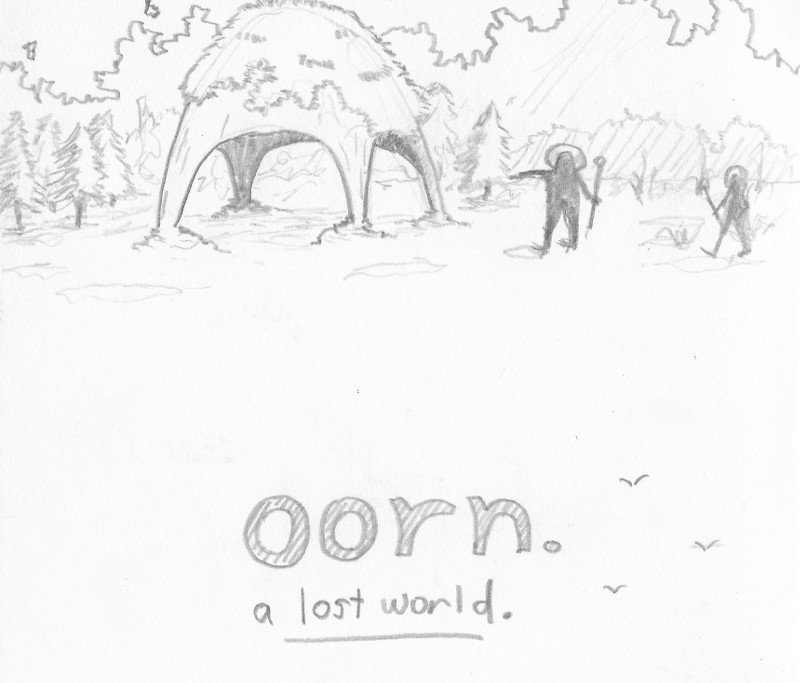

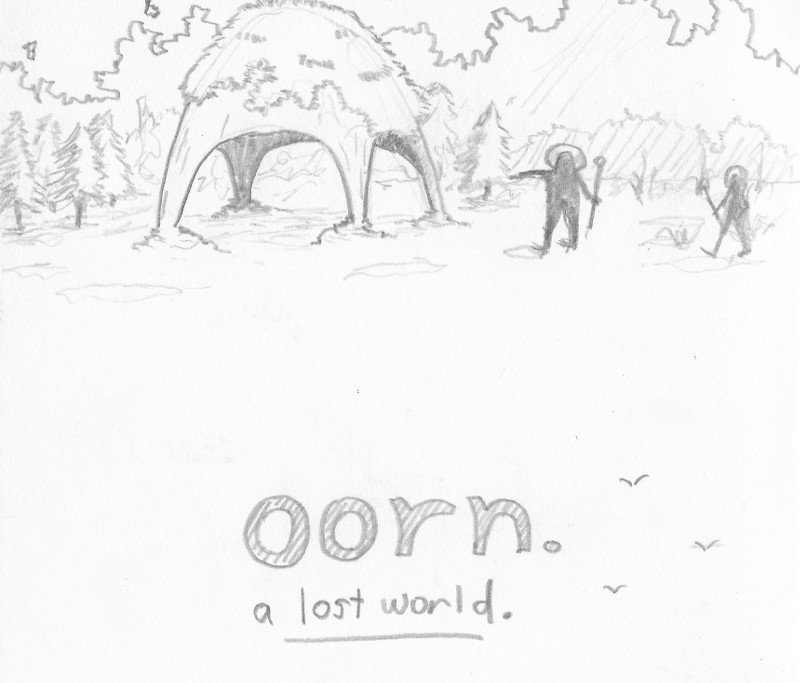

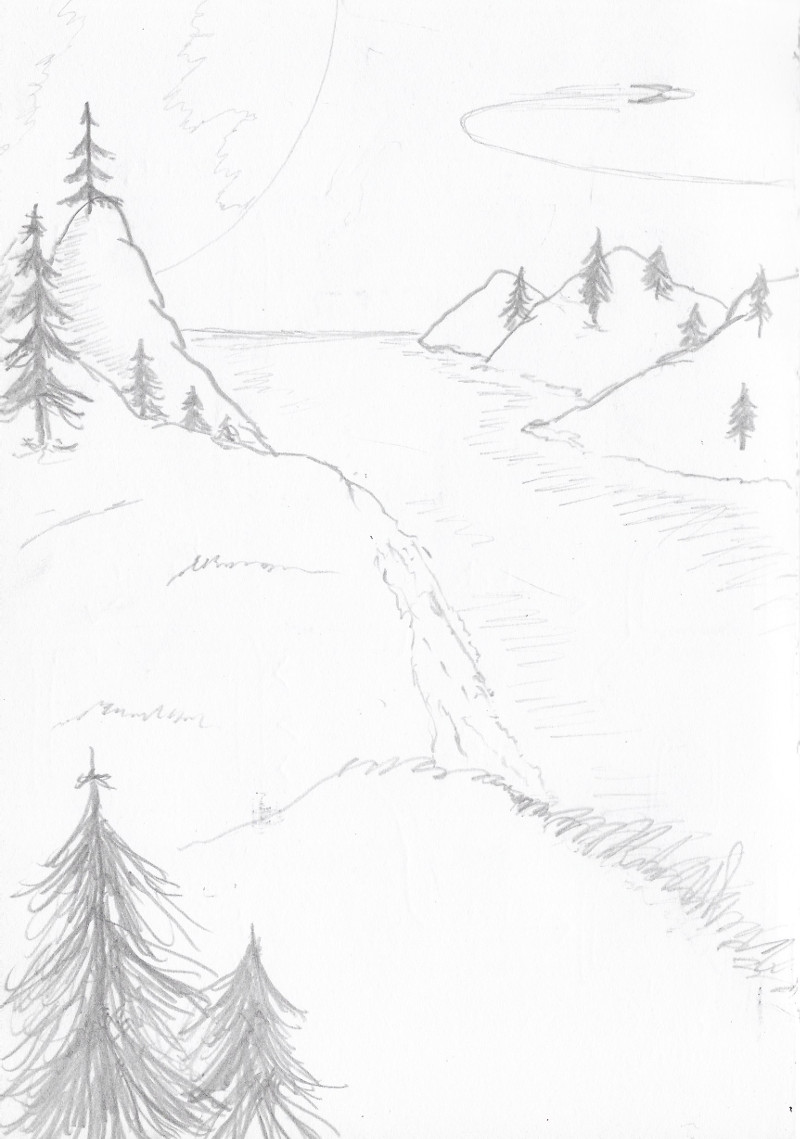

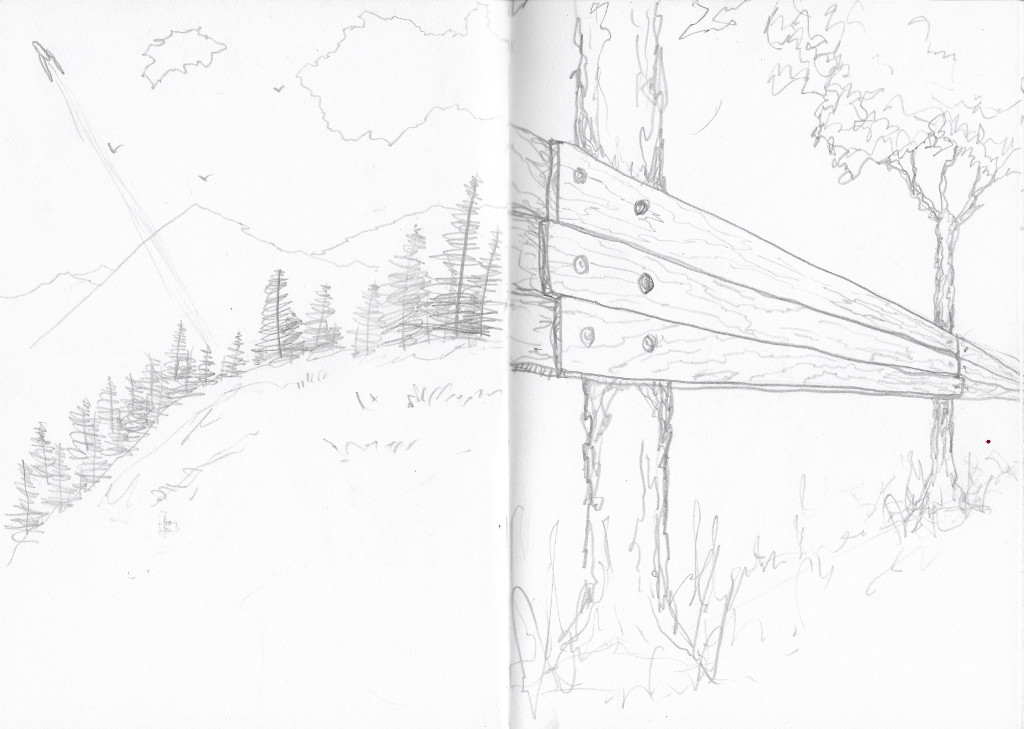

Recent-ish sketches. Been learning from The Etherington Brothers, Dr. Seuss, and How to Build Treehouses, Huts, and Forts.


Web applications often follow a client-server model, meaning that there is a piece of software which runs in your web browser (the client) and a piece of software which runs on a server somewhere. I'm interested in this model, where it came from, and where it's going, and I discuss this below. I'll also discuss a new model for self-hosting web applications that I've been exploring.

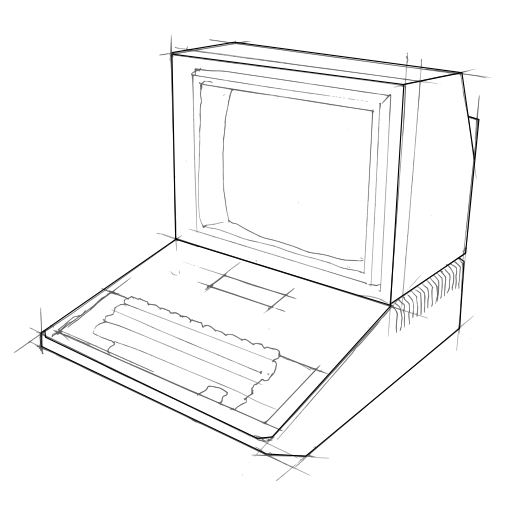

In the old days most software people ran was in the form of applications that you would download onto your PC and run. This was quite a decentralised model in that you could find whatever software you liked and run it on your machine without asking permission from anybody.

The modern mobile-device app stores and the web have deviated from this model. In the case of app stores you can only download software that is sanctioned by the company that runs the app store. On Android it is quite easy to change the settings on your device to install software applications from any source, but this is not the default, and on Apple devices it's very difficult to do.

The web has a more complicated approach. Running software in your web browser is generally as easy as visiting a URL and people are free to run whatever websites and "web apps" they like on their devices. As mentioned above however, the server-side portion of the web application you are using is often running on somebody else's server computer and not in your control.

Over time more and more functionality has moved from server side code into the web browser. Applications like Gmail pioneered this transition. Recent browser technologies like WebRTC and service workers have given a stronger base to build client-only applications on. The "add to homescreen" paradigm, whilst relatively obscure, presents a vector for running web applications on your computers and devices in a similar way to installed applications.

The buzz-word "serverless" captures the zeitgeist: moving more and more of the software off the server and into the web browser client. The reason software developers are generally in favour of this move is because it is easier to deploy code to web browsers than it is to deploy code to servers; it's easier to get users to run that software (just send them a link); and there is less of a maintenance burden if you don't have to keep a server running.

There's another strong reason why client side code is more favourable than server side code and that is because most server code is running on servers you don't own. For example when you run Gmail the server code and all of your emails are completely controlled by a third party and trusted third parties are security holes.

Even "serverless" in the context of web apps just means that the server component requires less infrastructure and is easier for developers to deploy. The code is still running on a machine owned by some third party who is neither the user nor the developer.

Whilst this move towards client-side web applications is good in terms of user control, a problem even with "serverless" web applications that run in your browser is that the software can be modified without your consent. When you load up a web application tomorrow the developer might have made a new version with features you don't like and your browser will load the new version automatically even if you wanted the old version. Even worse, because you load a fresh copy of the software each time you use it, a man-in-the-middle attacker might sneak a malicious bit of code into the version you load tomorrow that is not there today.
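To make the "version you load tomorrow" problem concrete, here is a minimal Python sketch of pinning: remember a fingerprint of the code you trust today and refuse anything that doesn't match. The function names and the example scripts are hypothetical, invented for illustration. Browsers offer a related mechanism, Subresource Integrity, for scripts a page loads, but nothing pins the page itself by default.

```python
import hashlib


def fingerprint(code: bytes) -> str:
    """Return a SHA-256 fingerprint of a piece of downloaded code."""
    return hashlib.sha256(code).hexdigest()


def is_trusted(code: bytes, pinned: str) -> bool:
    """Accept the code only if it matches the fingerprint we pinned earlier."""
    return fingerprint(code) == pinned


# Hypothetical app code as served today; we pin its fingerprint.
today = b"console.log('hello');"
pinned = fingerprint(today)

# Hypothetical code served tomorrow, changed by the developer or an attacker.
tomorrow = b"console.log('hello'); stealCookies();"

print(is_trusted(today, pinned))     # the pinned version is accepted
print(is_trusted(tomorrow, pinned))  # any modified version is rejected
```

Pinning only detects the change. It can't tell you whether the new version is a welcome update or an attack, which is why control over when you upgrade matters.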

There are also many use-cases where only a piece of server-side software will do. Generally these use-cases fall under the umbrella of asynchronous communication, such as storing a file for later retrieval, sending a message to one or more people which might only be received when you are offline, syncing state between devices which might be connected at different times etc.
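The common thread in those use-cases is store-and-forward: something has to hold the data while one party is offline. A toy in-memory sketch in Python, assuming a hypothetical `Mailbox` relay (a real service would also need persistence and authentication):

```python
from collections import defaultdict, deque


class Mailbox:
    """Toy relay that holds messages until the recipient comes online."""

    def __init__(self) -> None:
        self._queues: dict[str, deque[str]] = defaultdict(deque)

    def send(self, recipient: str, message: str) -> None:
        """Store a message; the recipient does not need to be connected."""
        self._queues[recipient].append(message)

    def fetch(self, recipient: str) -> list[str]:
        """Hand over all waiting messages, oldest first, and clear the queue."""
        queue = self._queues[recipient]
        messages = list(queue)
        queue.clear()
        return messages


relay = Mailbox()

# Alice sends while Bob is offline, then disconnects herself.
relay.send("bob", "hi Bob")
relay.send("bob", "call me when you're back")

# Later Bob connects and collects what was stored for him.
inbox = relay.fetch("bob")
print(inbox)
```

Neither Alice nor Bob is online for the whole exchange. The relay is the only piece that has to keep running, and that is the part that usually ends up on somebody else's server.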

Examples of things the server code will typically take care of:

So the world is trending towards a more fragile situation of centralized control of the software we all rely on. It would be better for humanity if we were instead moving towards decentralized models of software where an individual can run and control the software they want without fear of a fragile centralized system failing or being used against them by powerful interests and hackers.

So these latter problems are some of the reasons why self-hosting web applications is still a good move in terms of security and control. When self-hosting, all of the software and all of the data is running on your own computers and you don't have to trust any third party to not be evil, corrupt, make mistakes etc. You can choose which software to install and run and when without obstruction. You get the benefits of cloud connected software - shared state between devices and other users - without having to trust a third party.

The main problem with the self-hosting approach is that it is very technical and therefore simply not an option for most users. Some of the barriers to people self-hosting their web software stacks:

These are the types of things that systems administrators are paid a lot of money to take care of because they are difficult and skillful work. These are the things that big companies are doing for you when you use their online services. The unwritten contract we engage in today is that we give big companies control over our digital life and our valuable personal data in exchange for their building, installing, and maintaining the infrastructure code on their servers.

An additional problem is that even in the "host your own server" model there is a lot of centralization and passing around of valuable and private personal information. For example, obtaining a domain name and SSL certificate requires central authorities who almost always require a lot of personal information, not to mention forking over cash. Additionally, if you host your code on a VPS - as is the case with almost all server side code on the internet - some trusted third party has unlimited access to the machine you are renting.

So the interesting question is whether there is some way to make self-hosting possible for everybody in the same way that PCs and phones made "run the software I want" easy for everybody.

There was a time when a lot of web applications were deployed as simple PHP scripts with HTML, CSS & Javascript for the front-end. This was probably the simplest format for self-hosted applications as it was easy to find hosting providers who would let you run PHP, and installation was as simple as copying files up to a server. I think this is a big reason why PHP has remained popular for so long despite the fact that it's such an awful language with frequent security issues. It simply let you do what you want. These days though, a lot of modern software on the internet is not written in PHP and developers often prefer other language ecosystems.

Another idea for self-hosting was the Freedom Box pioneered by Free Software enthusiasts in the 2000s. The idea is pretty good but does not seem to have achieved widespread adoption. My guess is the use cases are still too obscure for most users. What does "run your own XMPP server" mean? Most people have no idea.

A lot of people today are of the mind that "blockchain" is the singular solution to the issue of decentralizing the software we run. In this model you pay in small increments for the server side code which runs on a distributed system shared amongst many participants. You maintain control of your piece of the distributed service and your data stored in it using cryptography. In this model the server component and app state is shared across many computers all at once and you basically pay for a small slice of it.

This is a fascinating idea but I am not completely convinced that "blockchain" will be the future of decentralized software applications because:

In addition to the above ideas there exist new ecosystems like ownCloud and Sandstorm which enable people to deploy their own "stacks" hosted on their own servers. These are noble efforts at decentralization and self-hosting into which many people have poured a lot of work. The problem with these "host-it-yourself cloud stacks" is that they often still require trust in some 3rd party hosting service, and quite deep technical knowledge. Sure, for a nerd it is easy to upload a PHP script, configure a MySQL server, purchase VPS hosting, and install a Let's Encrypt SSL certificate, but it is not always so easy for a nerd's mother, father or friend.

I've been thinking about all of this in recent years. I've been asking myself these questions:

What if running persistent server side code was available to anybody, even non-technical users? What if installing server side software was as click-tap easy as it is on a phone or PC? What if you could run cloud software and servers on the desktop PC in your office, or tablet, or Raspberry Pi on your home network? What does it look like when anybody in the world can self-host their "cloud" software?

Criteria for such a system would be:

As I've thought about these ideas I've also been tinkering with WebTorrent. It's a cool piece of technology which is bringing a chunk of the BitTorrent architecture to the web.

Working with this technology made me realise something the other day: it's now possible to host back-end services, or "servers" inside browser tabs.

Instead of a VPS server running in some data center, you have a browser tab running on a spare computer. Instead of clients talking to servers over HTTP they can talk over WebRTC. You build your "backend" service code with the same tech as your client side web app, with HTML and Javascript.

When I realised this it blew my mind. I couldn't stop thinking about it. Maybe the persistent browser tab on the home PC can be the new server for self-hosting users? What if grandma could build out a server as easily as opening a browser tab and leaving it running?

So anyway, I've made this weird thing to enable developers to build "backend" services which run in browser tabs. It's called Bugout and it has been fun to hack together.

Check it out and let me know what you think.

Speccy is a small utility I built for live-coding chiptune music in the browser with ClojureScript.

You can copy sounds from sfxr.me and paste them in as synth definitions, using code to modify any parameter over time, or start from scratch by building a synth up from basic parameters.

You can paste the following examples into the online editor to try it out:

```clojure
; blippy synth
(sfxr {:wave :square :env/decay 0.1 :note #(at % 32 {0 24 3 29 7 60 12 52 19 29 28 52})})

; donk bass
(sfxr "1111128F2i1nMgXwxZ1HMniZX45ZzoZaM9WBtcQMiZDBbD7rvq6mBCATySSmW7xJabfyy9xfh2aeeB1JPr4b7vKfXcZDbWJ7aMPbg45gBKUxMijaTNnvb2pw"
      {:note #(or
                (at % 57
                    {5 35
                     27 34})
                (at % 32
                    {0 24
                     6 29
                     18 21
                     26 12}))})

; hi hat
(sfxr {:wave :noise
       :env/sustain 0.05
       :env/decay 0.05
       :vol #(sq % [0.3 0.1 0.2 0.1])})

; snare
(sfxr "7BMHBGCKUHWi1mbucW62sVAYvTeotkd4qSZKy91kof8rASWsAx1ioV4EjrXb9AHhuKEprWr2D4u4YHJpYEzWrJd8iitvr23br2DCGu7zMqFmPyoSFtUEqiM64"
      {:note 36
       :vol #(at % 16
                 {4 0.5
                  12 0.5})})
```

The full source code and documentation are available on GitHub.

Enjoy!

Here are some illustrated explanations of the main ways in which cryptographic hash functions can be attacked, and of what it means for a hash function to resist each attack.

Zooko Wilcox's blog post Lessons From The History Of Attacks On Secure Hash Functions gives us a nice overview of these and I've quoted his concise explanations below. Check out his great post for more detail and history on this topic.

A cryptographic hash function is an important building block in the cryptographic systems that keep us safe in our communications on the internet.

A hash function takes some input data and generates a hopefully unique string of bits for each different input. The same input always generates the same result.
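A quick way to see this is with SHA-256 from Python's standard `hashlib`. This is only a small sketch; the helper name `sha256_hex` is mine and the inputs are arbitrary.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 output for `data` as a hex string."""
    return hashlib.sha256(data).hexdigest()


a = sha256_hex(b"hello")
b = sha256_hex(b"hello")  # same input again
c = sha256_hex(b"hellp")  # one character changed

print(a == b)      # same input, same result
print(a == c)      # a tiny change in the input gives a different result
print(len(a) * 4)  # number of output bits, regardless of input length
```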

The input to a secure hash function is called the pre-image and the output is called the image.

I use the following colour key in the diagrams:

Red for inputs which can be varied by an attacker.

Green for inputs which can't be varied under the attack model.

To attack a hash function the variable inputs are generally iterated on in a random or semi-random brute-force manner.

A hash function collision is two different inputs (pre-images) which result in the same output. A hash function is collision-resistant if an adversary can't find any collision.
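To get a feel for a collision search, here is a sketch against a deliberately weakened toy hash: the first two bytes of SHA-256. `tiny_hash` and `find_collision` are names I made up for illustration. The attacker is free to vary both inputs, so they just keep hashing candidates until any two of them land on the same image.

```python
import hashlib
from itertools import count


def tiny_hash(data: bytes, n_bytes: int = 2) -> bytes:
    """Toy hash: the first n_bytes of SHA-256. Deliberately weak."""
    return hashlib.sha256(data).digest()[:n_bytes]


def find_collision(n_bytes: int = 2) -> tuple[bytes, bytes]:
    """Try inputs until two different ones share an image."""
    seen: dict[bytes, bytes] = {}  # image -> first pre-image that produced it
    for i in count():
        candidate = str(i).encode()
        image = tiny_hash(candidate, n_bytes)
        if image in seen:
            return seen[image], candidate
        seen[image] = candidate


first, second = find_collision()
print(first, second, tiny_hash(first).hex())
```

With a 16-bit output there are only 65,536 possible images, so a collision turns up quickly. The same search against the full 256-bit output is not feasible.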

A hash function is pre-image resistant if, given an output (image), an adversary can't find any input (pre-image) which results in that output.
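A pre-image attack can be sketched with the same kind of toy hash (first two bytes of SHA-256; the names `tiny_hash` and `find_preimage` are hypothetical). Here the attacker only sees the image and has to find some input that produces it. It need not be the original input.

```python
import hashlib
from itertools import count


def tiny_hash(data: bytes, n_bytes: int = 2) -> bytes:
    """Toy hash: the first n_bytes of SHA-256. Deliberately weak."""
    return hashlib.sha256(data).digest()[:n_bytes]


def find_preimage(target: bytes, n_bytes: int = 2) -> bytes:
    """Given only an image, brute-force any input that hashes to it."""
    for i in count():
        candidate = str(i).encode()
        if tiny_hash(candidate, n_bytes) == target:
            return candidate


# The attacker is handed this image but never sees the input behind it.
target = tiny_hash(b"secret message")

found = find_preimage(target)
print(found, tiny_hash(found) == target)
```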

A hash function is second-pre-image resistant if, given one pre-image, an adversary can't find any other pre-image which results in the same image.
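The second-pre-image case looks almost the same in code, again using a toy two-byte truncation of SHA-256 with hypothetical helper names. The difference is that the attacker is given the original input, and must find a different input with the same image.

```python
import hashlib
from itertools import count


def tiny_hash(data: bytes, n_bytes: int = 2) -> bytes:
    """Toy hash: the first n_bytes of SHA-256. Deliberately weak."""
    return hashlib.sha256(data).digest()[:n_bytes]


def find_second_preimage(original: bytes, n_bytes: int = 2) -> bytes:
    """Given one pre-image, find a different one with the same image."""
    target = tiny_hash(original, n_bytes)
    for i in count():
        candidate = str(i).encode()
        if candidate != original and tiny_hash(candidate, n_bytes) == target:
            return candidate


original = b"pay alice 10 coins"  # fixed input the attacker cannot change
other = find_second_preimage(original)
print(other, tiny_hash(other) == tiny_hash(original))
```

Unlike the collision search, one side is fixed here, so the attacker has to hit one specific image rather than any matching pair. Against a full-size hash that makes it a much harder attack than finding a collision.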

Hopefully these diagrams help to clarify how these attacks work!